I asked, you voted! ... Azure Digital Twins it is.

I won't spend time repeating the Microsoft documentation on 'Set up an Azure Digital Twins instance and authentication (portal)'; it's pretty straightforward. Azure portal, click, click, voilà!

Please note the section that details how to 'Set up user access permissions'. In our case this was a showstopper, as we did not have the correct permissions, nor the rights to set them. Thanks to some people with the right rights, the permissions got sorted out.

Azure Portal is nice, but I get dizzy from it, so I prefer the terminal for simple operations. Azure CLI! Well, not so fast: to use 'az dt' commands we must have the 'azure-iot' extension in place. Once we have it, and after proper authentication, nothing stops us from running 'az dt'.

... and to properly try out a command-line tool for the first time, we need to list things with it:

$ az dt list
[
  {
    "createdTime": "2021-02-03T14:26:59.191311+00:00",
    "hostName": "xxxx.api.neu.digitaltwins.azure.net",
    "id": "/subscriptions/xxxx-xxxx-xxxx/resourceGroups/rg-dtwin-poc/providers/Microsoft.DigitalTwins/digitalTwinsInstances/dtwin",
    "identity": {
      "principalId": "xxxx-xxxx-xxxx",
      "tenantId": "xxxx-xxxx-xxxx",
      "type": "SystemAssigned"
    },
    "lastUpdatedTime": "2021-02-03T14:27:13.084718+00:00",
    "location": "northeurope",
    "name": "dtwin",
    "privateEndpointConnections": [],
    "provisioningState": "Succeeded",
    "publicNetworkAccess": "Enabled",
    "resourceGroup": "rg-dtwin-poc",
    "tags": {},
    "type": "Microsoft.DigitalTwins/digitalTwinsInstances"
  }
]

Fantastic! We can see that we have a DT (Digital Twin), and now what?

... if you have read through the page behind the first link in this article, none of this is new to you!

If you also eagerly checked out the 'ADT explorer' mentioned there, you might have noticed that it is not an official product, but a sample implementation. That did not stop me for a second: I cloned the repo and started up the app. I also tried to use the sample Excel file to kickstart a test model ... but no luck, so I made some notes on how a DT is described and structured through JSON:

{$dtId: "Floor1", $metadata: {$model: "dtmi:example:Floor;1"}}

{$dtId: "Floor0", $metadata: {$model: "dtmi:example:Floor;1"}}

{$dtId: "Room1", Temperature: 80, Humidity: 60, $metadata: {$model: "dtmi:example:Room;1"}}

{$dtId: "Room0", Temperature: 70, Humidity: 30, $metadata: {$model: "dtmi:example:Room;1"}}
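The notes above can also be written down as a small helper. This is only a minimal sketch and not something we ran during the experiment: `make_twin` is a hypothetical helper name, and it does nothing more than build the dictionary shape from the notes (`$dtId`, the properties, and `$metadata` with `$model`) and print it as proper JSON.

```python
import json


def make_twin(twin_id, model_id, **properties):
    """Build a twin document in the shape noted above (hypothetical helper)."""
    twin = {"$dtId": twin_id}
    twin.update(properties)  # e.g. Temperature, Humidity
    twin["$metadata"] = {"$model": model_id}
    return twin


twins = [
    make_twin("Floor1", "dtmi:example:Floor;1"),
    make_twin("Floor0", "dtmi:example:Floor;1"),
    make_twin("Room1", "dtmi:example:Room;1", Temperature=80, Humidity=60),
    make_twin("Room0", "dtmi:example:Room;1", Temperature=70, Humidity=30),
]

# Print one JSON document per twin, with properly quoted keys
for twin in twins:
    print(json.dumps(twin))
```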

Our best friend Mr. Terminal is back again: we create 2 Floors, 2 Rooms (with some properties), and make 2 relationships

between them. Since we don't trust commands blindly, we verify them with a query to be sure that everything is in order.

$ az dt twin create -n xxxx.api.neu.digitaltwins.azure.net --dtmi "dtmi:example:Floor;1" --twin-id Floor0

$ az dt twin create -n xxxx.api.neu.digitaltwins.azure.net --dtmi "dtmi:example:Floor;1" --twin-id Floor1

$ az dt twin create -n xxxx.api.neu.digitaltwins.azure.net --dtmi "dtmi:example:Room;1" --twin-id Room0 --properties '{ "Temperature": 70, "Humidity": 30}'

$ az dt twin create -n xxxx.api.neu.digitaltwins.azure.net --dtmi "dtmi:example:Room;1" --twin-id Room1 --properties '{ "Temperature": 80, "Humidity": 60}'

$ az dt twin relationship create -n xxxx.api.neu.digitaltwins.azure.net --relationship-id r1 --relationship contains --twin-id Floor1 --target Room1

$ az dt twin relationship create -n xxxx.api.neu.digitaltwins.azure.net --relationship-id r0 --relationship contains --twin-id Floor0 --target Room0

$ az dt twin query -n xxxx.api.neu.digitaltwins.azure.net -q "select * from digitaltwins"
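For those who prefer code over the CLI, the same steps could roughly be sketched with the Python SDK (`azure-digitaltwins-core`). Treat this as an assumption-laden sketch rather than what we actually ran: `build_graph` and `connect` are hypothetical names, the models `dtmi:example:Floor;1` and `dtmi:example:Room;1` are assumed to exist in the instance already, and `client` is assumed to behave like a `DigitalTwinsClient`.

```python
def build_graph(client):
    """Create 2 floors, 2 rooms and 2 'contains' relationships, then query.

    `client` is assumed to behave like azure.digitaltwins.core.DigitalTwinsClient.
    """
    floor_model = "dtmi:example:Floor;1"
    room_model = "dtmi:example:Room;1"

    twins = {
        "Floor0": {"$metadata": {"$model": floor_model}},
        "Floor1": {"$metadata": {"$model": floor_model}},
        "Room0": {"$metadata": {"$model": room_model}, "Temperature": 70, "Humidity": 30},
        "Room1": {"$metadata": {"$model": room_model}, "Temperature": 80, "Humidity": 60},
    }
    for twin_id, twin in twins.items():
        client.upsert_digital_twin(twin_id, twin)

    # Same relationships as the two 'az dt twin relationship create' calls
    for rel_id, source, target in [("r0", "Floor0", "Room0"), ("r1", "Floor1", "Room1")]:
        relationship = {
            "$relationshipId": rel_id,
            "$sourceId": source,
            "$relationshipName": "contains",
            "$targetId": target,
        }
        client.upsert_relationship(source, rel_id, relationship)

    # Verify with the same query as the CLI call
    return list(client.query_twins("SELECT * FROM digitaltwins"))


def connect(url):
    """Build a real client; imports are local so the sketch loads without the SDK."""
    from azure.identity import DefaultAzureCredential
    from azure.digitaltwins.core import DigitalTwinsClient

    return DigitalTwinsClient(url, DefaultAzureCredential())
```

A call would then look like `build_graph(connect("https://xxxx.api.neu.digitaltwins.azure.net"))`, with the same placeholder host name as in the commands above.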

Here is the part, where I'm sorry to say, but I forgot to take a backup of the query results, so I can't show them. After I created the

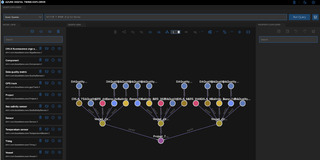

model, it was also possible to use 'ADT explorer' to query our DT. We could see a nice and shiny graphical representation of our model.

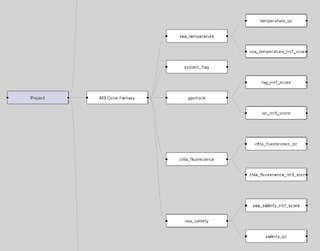

At my current company we created a demo platform, where we demo a shipping case. This shipping case includes a simple model to describe ships, sensors and things. At this point I cried for help to my partner in crime, who has a much more detailed understanding of that part. He is 'backend', while I'm 'frontend'; he is 'Python', while I'm 'JS'.

If you cry for help, you'd better come with some links prepared:

import os
import sys
import logging

from azure.identity import DefaultAzureCredential
from azure.core.exceptions import HttpResponseError
from azure.digitaltwins.core import DigitalTwinsClient

try:
    url = "https://xxxx.api.neu.digitaltwins.azure.net"
    credential = DefaultAzureCredential(
        exclude_environment_credential=True,
        exclude_managed_identity_credential=True,
        exclude_visual_studio_code_credential=True,
        exclude_shared_token_cache_credential=True,
        exclude_interactive_browser_credential=True
    )
    service_client = DigitalTwinsClient(url, credential)
    model_id = "dtmi:com:basefarm:core:Vessel;1"

    # Create logger
    logger = logging.getLogger('azure')
    logger.setLevel(logging.DEBUG)
    handler = logging.StreamHandler(stream=sys.stdout)
    logger.addHandler(handler)

    # Get model with logging enabled
    model = service_client.get_model(model_id, logging_enable=True)
    print(model)
except HttpResponseError as e:
    print("\nThis sample has caught an error. {0}".format(e.message))

From here, Mr. Python took over, exported the model from our demo system to JSON, and imported it into DT.
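I can't show Mr. Python's actual import script, so here is only a rough sketch of how such an import might look. The assumptions are mine: the export is a directory with one DTDL model per `.json` file, `load_models` and `import_models` are hypothetical names, and `client` is a `DigitalTwinsClient` like `service_client` in the sample above, whose `create_models` call takes a list of model documents.

```python
import json
from pathlib import Path


def load_models(directory):
    """Read every *.json file in `directory` as one DTDL model document."""
    models = []
    for path in sorted(Path(directory).glob("*.json")):
        with path.open(encoding="utf-8") as handle:
            models.append(json.load(handle))
    return models


def import_models(client, directory):
    """Upload the exported models in a single create_models call.

    Returns the '@id' of every model that was read from disk.
    """
    models = load_models(directory)
    if models:
        client.create_models(models)
    return [model["@id"] for model in models]
```

Sending all models in one call seemed the natural choice to me for models that reference each other, but I have not verified how the service behaves if they are uploaded one by one.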

By this time I had also become pretty familiar with the JavaScript APIs, so I extended my local test environment to query DT instead of our current backend. But that is another story for another time ...

...ADT is a tricky and complex beast. In this article I have mainly focused on our first modelling efforts, and did not touch the IoT or the storage aspects. If you read my previous article, you are aware of what I did around IoT, and we made similar efforts to explore Time Series Insights ...